Self-Attention Mechanism: Complete Learning Guide

Introduction

Self-attention is the core mechanism behind Transformer architectures and the large language models built on them. This guide covers the intuition, the underlying formula, multi-head attention, and common applications.

What is Self-Attention?

Self-attention allows each token in a sequence to attend to every token in that sequence, itself included, producing context-dependent representations. For each token, the mechanism computes three vectors:

- Query (Q): What information am I looking for?

- Key (K): What information do I contain?

- Value (V): What information do I actually pass along?
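The three roles can be read as a soft dictionary lookup: a query is compared against every key, and the result is a blend of the values. The snippet below is a minimal sketch of that idea for a single query. The toy `keys`, `values`, and `query` arrays are hypothetical numbers chosen for illustration, and the scores are scaled by √d_k as in the formula given in the Mathematical Foundation section.

```python
import numpy as np

# Hypothetical toy data: three tokens, each with a key (row) and a value (row).
keys = np.eye(3)
values = np.array([[1.0, 0.0],
                   [0.0, 1.0],
                   [1.0, 1.0]])
query = np.array([0.0, 5.0, 0.0])  # points in the direction of the second key

d_k = keys.shape[1]
scores = keys @ query / np.sqrt(d_k)      # similarity of the query to each key
weights = np.exp(scores - scores.max())   # softmax, shifted for numerical stability
weights /= weights.sum()
output = weights @ values                 # weighted blend of the value vectors

print(weights.round(3))
print(output.round(3))
```

Because the query matches the second key best, most of the weight lands on the second value, while the other two values still contribute a little. That is the "soft" part of the lookup.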

Mathematical Foundation

The self-attention formula is:

Attention(Q, K, V) = softmax(QK^T / √d_k)V

Where:

- Q, K, V are the Query, Key, and Value matrices

- d_k is the dimension of the key vectors

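- The division by √d_k keeps the dot products from growing with d_k; without it, large scores push the softmax into saturated regions where gradients become very small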
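The effect of the √d_k scaling can be checked numerically. The sketch below assumes query and key components are independent with zero mean and unit variance (an idealization, not something trained models guarantee) and measures the spread of the dot products with and without scaling. The helper name `dot_product_std` is hypothetical.

```python
import numpy as np

def dot_product_std(d_k, n_samples=10_000, seed=0):
    """Return the empirical std of q.k, unscaled and scaled by sqrt(d_k)."""
    rng = np.random.default_rng(seed)
    q = rng.standard_normal((n_samples, d_k))
    k = rng.standard_normal((n_samples, d_k))
    scores = np.sum(q * k, axis=1)  # one dot product per sample
    return scores.std(), (scores / np.sqrt(d_k)).std()

for d_k in (4, 64, 1024):
    raw, scaled = dot_product_std(d_k)
    print(f"d_k={d_k:5d}  raw std={raw:7.2f}  scaled std={scaled:5.2f}")
```

Under these assumptions the unscaled standard deviation grows roughly like √d_k, while the scaled one stays close to 1 regardless of dimension.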

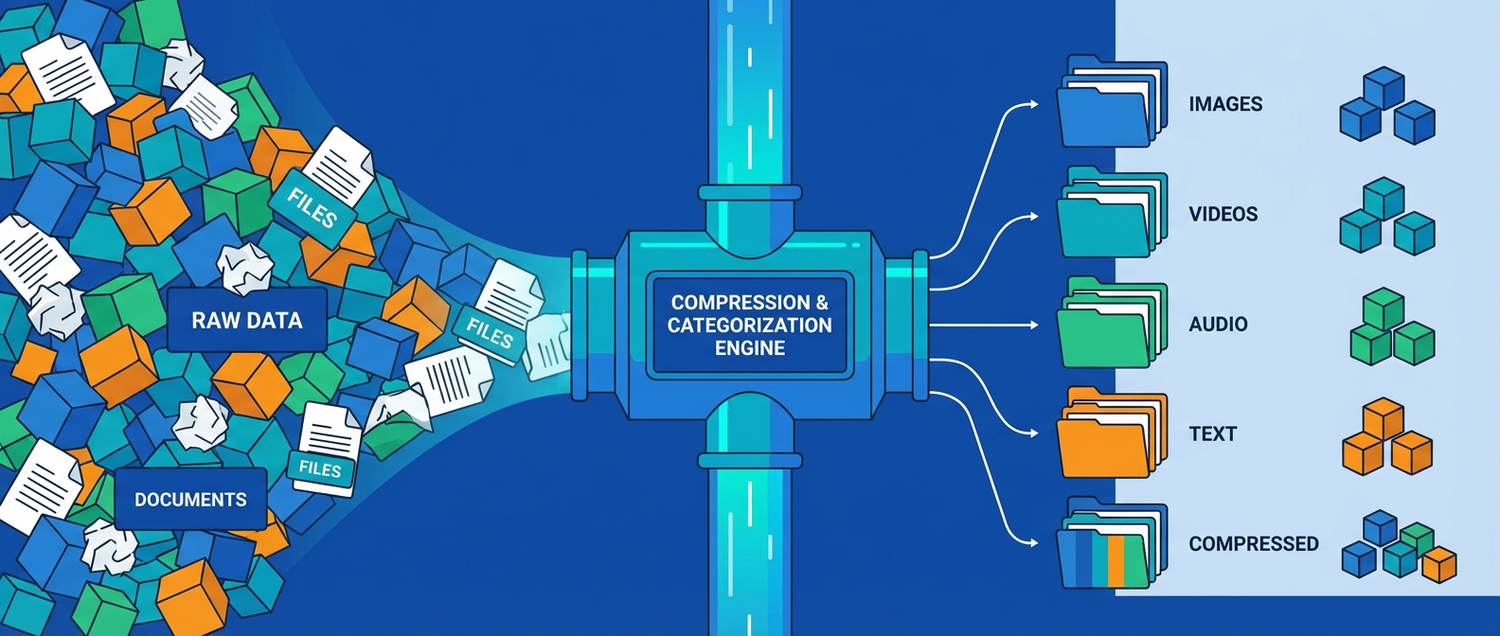

How It Works

- Linear Projections: Input embeddings are multiplied by learned weight matrices to produce Q, K, and V

- Attention Scores: Compute the scaled dot product between each query and every key

- Softmax Normalization: Convert each row of scores into weights that sum to 1

- Weighted Sum: Each output is the sum of the value vectors, weighted by the attention weights
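The four steps above can be sketched in a few lines of NumPy. This is a minimal single-head version without masking, batching, or bias terms. The weight matrices `W_q`, `W_k`, `W_v` are random stand-ins for learned parameters, and the sizes are arbitrary.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum before exponentiating for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    # 1. Linear projections of the input embeddings.
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    # 2. Attention scores: scaled dot product of every query with every key.
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)
    # 3. Softmax normalization over the keys (each row sums to 1).
    weights = softmax(scores, axis=-1)
    # 4. Weighted sum of the value vectors.
    return weights @ V, weights

rng = np.random.default_rng(0)
seq_len, d_model, d_k = 4, 8, 8          # arbitrary illustrative sizes
X = rng.standard_normal((seq_len, d_model))
W_q, W_k, W_v = (rng.standard_normal((d_model, d_k)) for _ in range(3))

output, weights = self_attention(X, W_q, W_k, W_v)
print(output.shape)    # (4, 8)
print(weights.shape)   # (4, 4)
```

Row i of `weights` holds how strongly token i attends to each token in the sequence, and row i of `output` is the corresponding mix of value vectors.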

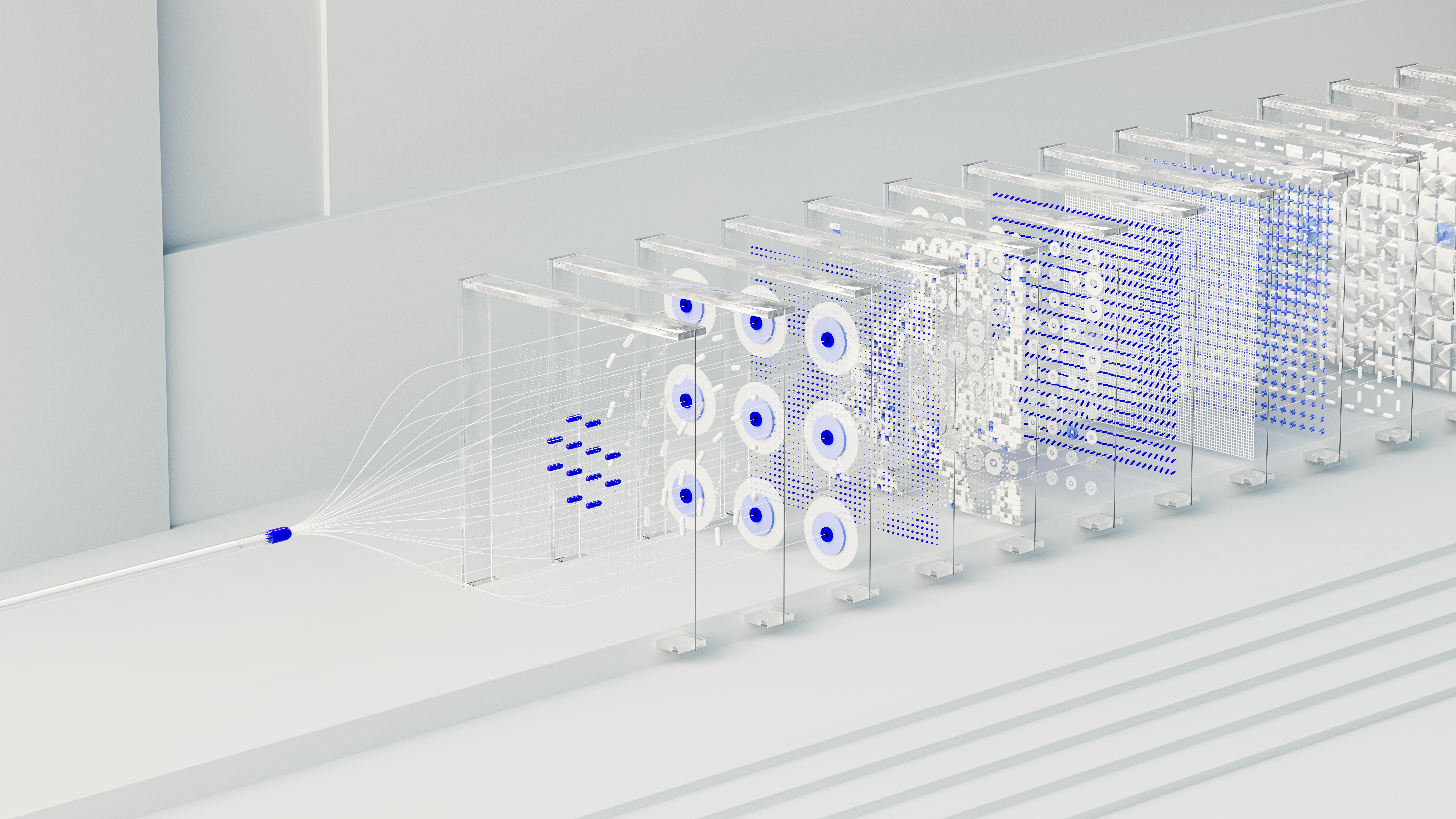

Multi-Head Attention

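Instead of a single attention function, multi-head attention runs several attention heads in parallel and combines their outputs:

MultiHead(Q, K, V) = Concat(head_1, ..., head_h)W^O

where head_i = Attention(QW_i^Q, KW_i^K, VW_i^V), each head has its own learned projection matrices, and W^O is the learned output projection.

Each head can learn different relationships. For example: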

- Head 1: Syntactic dependencies

- Head 2: Semantic relationships

- Head 3: Long-range dependencies
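A minimal NumPy sketch of multi-head self-attention follows. It assumes the common layout in which one d_model × d_model projection is split evenly across heads (so each head has dimension d_model / h), which is equivalent to giving each head its own smaller projection. The weights are random stand-ins for learned parameters, and masking and batching are omitted.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads):
    seq_len, d_model = X.shape
    assert d_model % num_heads == 0, "d_model must be divisible by num_heads"
    d_head = d_model // num_heads

    def split_heads(M):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return M.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    Q, K, V = (split_heads(X @ W) for W in (W_q, W_k, W_v))
    # Scaled dot-product attention, computed for all heads at once.
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)  # (h, seq, seq)
    weights = softmax(scores, axis=-1)
    heads = weights @ V                                  # (h, seq, d_head)
    # Concatenate the heads along the feature axis, then apply W^O.
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ W_o, weights

rng = np.random.default_rng(0)
seq_len, d_model, num_heads = 5, 16, 4   # arbitrary illustrative sizes
X = rng.standard_normal((seq_len, d_model))
W_q, W_k, W_v, W_o = (rng.standard_normal((d_model, d_model)) for _ in range(4))

out, attn = multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads)
print(out.shape, attn.shape)  # (5, 16) (4, 5, 5)
```

Each slice `attn[i]` is the attention pattern of head i. What a given head ends up specializing in is determined by training, not by the architecture.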

Practical Applications

Self-attention is used in:

- Language translation

- Text generation

- Question answering

- Code completion

- Image understanding

Key Advantages

- Parallelization: Unlike RNNs, which step through a sequence one position at a time, self-attention processes all positions at once

- Long-range Dependencies: Direct connections between all positions

- Interpretability: Attention weights give a partial view of which tokens the model weights most heavily for each position

Learn More

Explore our interactive 3D visualization to see self-attention in action!

XIA LEI
